ChainerMN on AWS with CloudFormation

Japanese version is here

AWS CloudFormation is a service that helps us practice Infrastructure as Code across a wide variety of AWS resources. It provisions AWS resources in a repeatable manner and lets us build and rebuild infrastructure without time-consuming manual actions or custom scripts.

Building distributed deep learning infrastructure involves extra hassle, such as installing and configuring deep learning libraries, setting up EC2 instances, and optimizing for computational and network performance. In particular, running ChainerMN requires you to set up an MPI cluster. AWS CloudFormation helps us automate this process.

Today, we announce a Chainer/ChainerMN pre-installed AMI and a CloudFormation template for ChainerMN clusters.

These let you spin up a ChainerMN cluster on AWS and run your ChainerMN tasks in the cluster right away.

This article explains how to use them and how you can run distributed deep learning with ChainerMN on AWS.

Chainer AMI

The Chainer AMI comes with Chainer/CuPy/ChainerMN, their family libraries (ChainerCV and ChainerRL), and CUDA-aware OpenMPI libraries, so you can easily run Chainer/ChainerMN workloads on AWS EC2 instances, including ones with GPUs. This image is based on the AWS Deep Learning Base AMI.

The latest version is 0.1.0. The version includes:

- OpenMPI version 2.1.3

  - it was built only for cuda-9.0.

- All Chainer Families (they are built and installed against both python and python3 environment)

  - CuPy version 4.1.0

  - Chainer version 4.1.0

  - ChainerMN version 1.3.0

  - ChainerCV version 0.9.0

  - ChainerRL version 0.3.0

CloudFormation Template For ChainerMN

This template automatically sets up a ChainerMN cluster on AWS. Here’s the setup overview for AWS resources:

- VPC and Subnet for the cluster (you can configure existing VPC/Subnet)

- S3 Bucket for sharing ephemeral ssh-key, which is used to communicate among MPI processes in the cluster

- Placement group for optimizing network performance

- ChainerMN cluster which consists of:

  - 1 master EC2 instance

  - N (>=0) worker instances (via AutoScalingGroup)

  - chainer user to run mpi job in each instance

  - hostfile to run mpi job in each instance

- (Optional) Amazon Elastic File System (you can configure an existing filesystem)

  - This is mounted on cluster instances automatically to share your code and data.

- Several required SecurityGroups, IAM Role
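To make the hostfile item in the list above concrete, here is a minimal sketch of what such a file could look like. It assumes Open MPI's `hostname slots=N` hostfile syntax; the IP addresses, the slot count, and the helper name `render_hostfile` are illustrative and not taken from the template, which may generate its hostfile differently.

```python
def render_hostfile(hosts, slots_per_host):
    """Return Open MPI hostfile text: one 'host slots=N' line per instance."""
    if slots_per_host < 1:
        raise ValueError("slots_per_host must be at least 1")
    lines = ["{} slots={}".format(host, slots_per_host) for host in hosts]
    return "\n".join(lines) + "\n"


# Hypothetical private IPs for one master and three workers, 8 GPUs each.
example_hosts = ["10.0.0.10", "10.0.0.11", "10.0.0.12", "10.0.0.13"]
hostfile_text = render_hostfile(example_hosts, 8)
print(hostfile_text, end="")
```

Each `slots` value tells Open MPI how many processes it may place on that host, which is why it would typically match the number of GPUs per instance.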

The latest version is 0.1.0. Please see the latest template for detailed resource definitions.

As stated in our recent blog post on ChainerMN 1.3.0, using its new features (double buffering and all-reduce in half-precision floats) enables almost linear scalability on AWS even at Ethernet speeds.

How to build a ChainerMN Cluster with the CloudFormation Template

This section explains, step by step, how to set up a ChainerMN cluster on AWS.

First, click the link below to create an AWS CloudFormation stack, then click ‘Next’ on the page.

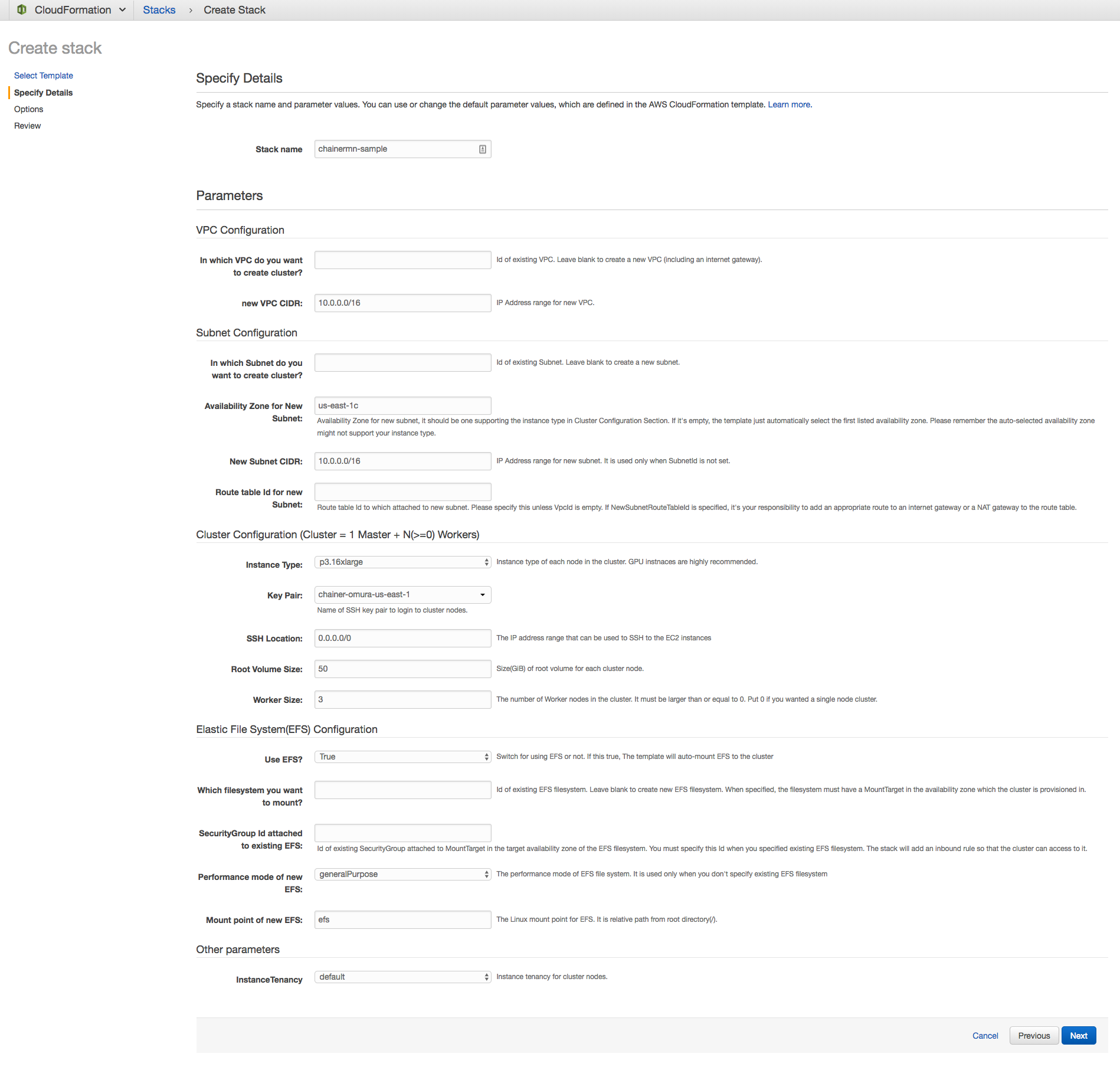

On the “Specify Details” page, you can configure the stack name and the VPC/Subnet, cluster, and EFS parameters. The screenshot below shows an example configuration with 4 p3.16xlarge instances, each of which has 8 NVIDIA Tesla V100 GPUs.

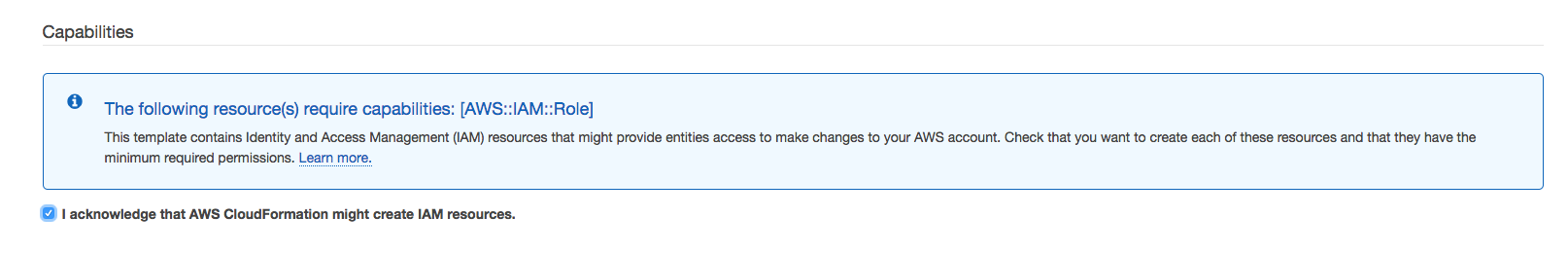

On the final confirmation page, you need to check the box in the CAPABILITY section because this template creates IAM roles for the cluster instances.
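The same acknowledgement applies if you create the stack through the API instead of the console. The sketch below builds the keyword arguments one could pass to boto3's `create_stack`. The stack name, the template URL, the parameter keys, and the helper name are hypothetical placeholders (check the template for the real ones), and `CAPABILITY_IAM` is assumed to be sufficient for this template.

```python
def build_create_stack_kwargs(stack_name, template_url, parameters):
    """Build keyword arguments for CloudFormation's create_stack call."""
    return {
        "StackName": stack_name,
        "TemplateURL": template_url,
        # CloudFormation expects parameters as a list of key/value records.
        "Parameters": [
            {"ParameterKey": key, "ParameterValue": str(value)}
            for key, value in sorted(parameters.items())
        ],
        # API equivalent of ticking the IAM acknowledgement box in the console.
        "Capabilities": ["CAPABILITY_IAM"],
    }


kwargs = build_create_stack_kwargs(
    "chainermn-sample",                        # hypothetical stack name
    "https://example.com/chainermn.template",  # placeholder, not the real URL
    {"InstanceType": "p3.16xlarge", "WorkerSize": 3},  # hypothetical keys
)
# With AWS credentials configured, one could then call (not run here):
#   import boto3
#   boto3.client("cloudformation").create_stack(**kwargs)
```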

After several minutes (depending on cluster size), the status of the stack should converge to CREATE_COMPLETE if all went well, meaning your cluster is ready. You can access the cluster with ClusterMasterPublicDNS, which appears in the output section of the stack.
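If you prefer to check this programmatically, the sketch below shows one way to read the status and the ClusterMasterPublicDNS output from a response shaped like the one returned by boto3's `describe_stacks`. The helper names and the sample response (including its stack name and DNS value) are made up for illustration; only the `Stacks`, `StackStatus`, `Outputs`, `OutputKey`, and `OutputValue` fields follow the CloudFormation API.

```python
def find_stack_output(response, output_key):
    """Return the value of one stack output, or None if it is absent."""
    for stack in response.get("Stacks", []):
        for output in stack.get("Outputs", []):
            if output.get("OutputKey") == output_key:
                return output.get("OutputValue")
    return None


def stack_is_ready(response):
    """True when the first stack in the response reports CREATE_COMPLETE."""
    stacks = response.get("Stacks", [])
    return bool(stacks) and stacks[0].get("StackStatus") == "CREATE_COMPLETE"


# Made-up response for illustration. A real one would come from:
#   boto3.client("cloudformation").describe_stacks(StackName="...")
sample_response = {
    "Stacks": [{
        "StackName": "chainermn-sample",
        "StackStatus": "CREATE_COMPLETE",
        "Outputs": [
            {"OutputKey": "ClusterMasterPublicDNS",
             "OutputValue": "master.example.com"},
        ],
    }]
}

if stack_is_ready(sample_response):
    print(find_stack_output(sample_response, "ClusterMasterPublicDNS"))
```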

How to Run a ChainerMN Job in the Cluster

You can access the cluster instances with the keypair that was specified in the template parameters.

ssh -i keypair.pem ubuntu@<ClusterMasterPublicDNS>

Because the Chainer AMI comes with all the libraries required to run Chainer/ChainerMN jobs, you only need to download your code to the instances.

# switch user to chainer

ubuntu@ip-ww-xxx-yy-zzz$ sudo su chainer

# download ChainerMN's train_mnist.py into EFS

chainer@ip-ww-xxx-yy-zzz$ wget https://raw.githubusercontent.com/chainer/chainermn/v1.3.0/examples/mnist/train_mnist.py -O /efs/train_mnist.py

That’s it! Now you can run the MNIST example with ChainerMN just by invoking the mpiexec command.

# It will spawn 32 processes (-n option) across 4 instances, 8 processes per instance (-N option)

chainer@ip-ww-xxx-yy-zzz$ mpiexec -n 32 -N 8 python /efs/train_mnist.py -g

...(you will see ssh warning here)

==========================================

Num process (COMM_WORLD): 32

Using GPUs

Using hierarchical communicator

Num unit: 1000

Num Minibatch-size: 100

Num epoch: 20

==========================================

epoch main/loss validation/main/loss main/accuracy validation/main/accuracy elapsed_time

1 0.795527 0.316611 0.765263 0.907536 4.47915

...

19 0.00540187 0.0658256 0.999474 0.979351 14.7716

20 0.00463723 0.0668939 0.998889 0.978882 15.2248

# NOTE: the output above is actually from the second run, because the first run has to download the MNIST dataset.
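The relationship between the two mpiexec options used above is simple arithmetic: the total process count (-n) is the number of instances times the processes per instance (-N). The following sketch derives the command line from those two numbers; the helper name `build_mpiexec_command` is hypothetical, while the flags and the script path are the ones used in this article.

```python
import shlex


def build_mpiexec_command(num_instances, procs_per_instance, script, use_gpu=True):
    """Build the mpiexec argument list for a ChainerMN training script."""
    total_procs = num_instances * procs_per_instance
    command = [
        "mpiexec",
        "-n", str(total_procs),         # total number of MPI processes
        "-N", str(procs_per_instance),  # processes per instance
        "python", script,
    ]
    if use_gpu:
        command.append("-g")  # train_mnist.py's flag for using GPUs
    return command


# 4 p3.16xlarge instances with 8 GPUs each, one process per GPU.
command = build_mpiexec_command(4, 8, "/efs/train_mnist.py")
print(" ".join(shlex.quote(part) for part in command))
# prints: mpiexec -n 32 -N 8 python /efs/train_mnist.py -g
```

Keeping one process per GPU means that changing the cluster size only requires changing `num_instances`.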

About Chainer

Chainer is a Python-based, standalone open source framework for deep learning models. Chainer provides a flexible, intuitive, and high performance means of implementing a full range of deep learning models, including state-of-the-art models such as recurrent neural networks and variational autoencoders.
